As per this announcement made in February 2021, Microsoft Graph now supports granular permissions through the Sites.Selected application permission, so an Azure AD application no longer has to be granted access to every site in the tenant. On its own, Sites.Selected gives the application no access to any SharePoint site collection; an admin must first assign the application a read or write role on each site. In this post, let us see how to grant a site role (read or write) to an Azure AD application that has the Sites.Selected permission, using the Postman client. At the time of writing, there is no user interface for assigning an application permissions to specific site collections.

Prerequisites:

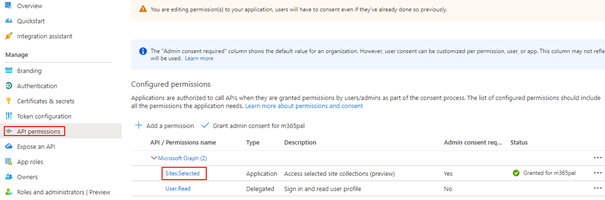

- Register an Azure AD application (APP 1) in the Azure AD portal with the following permission

- Sites.Selected (Admin Consented)

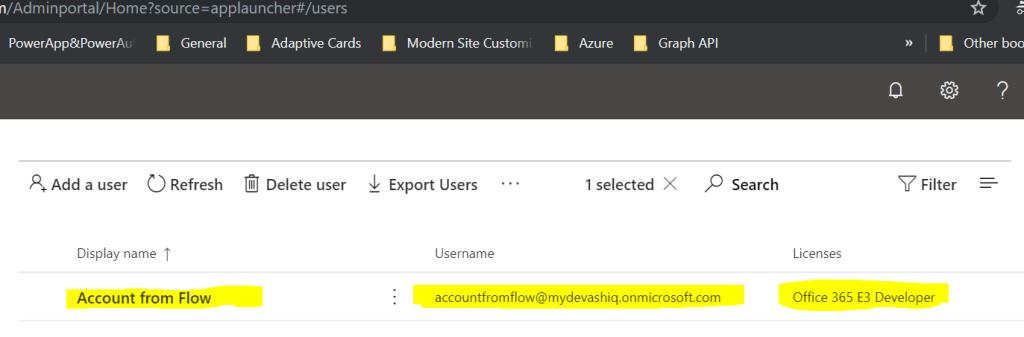

- Register another Azure AD application (APP 2), used only by admins to assign site roles to APP 1, with the following permission

- Sites.FullControl.All (Admin Consented)

App Registration:

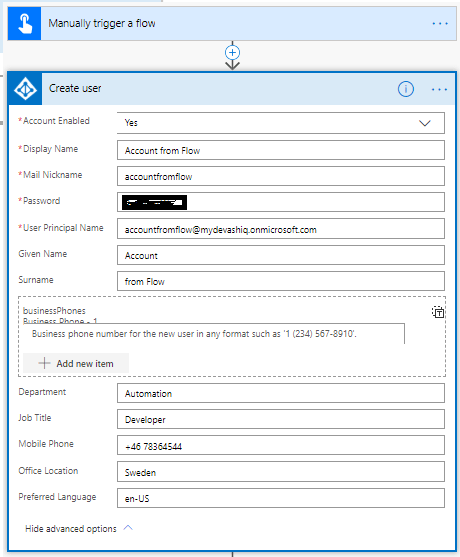

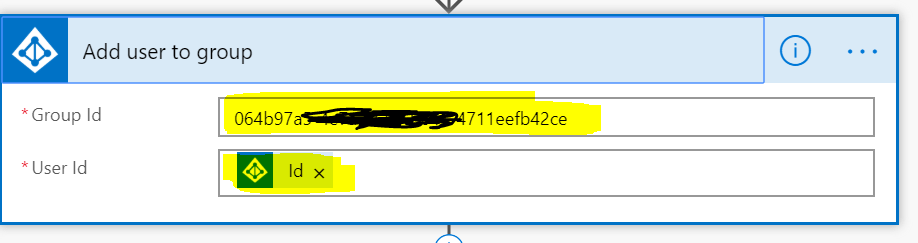

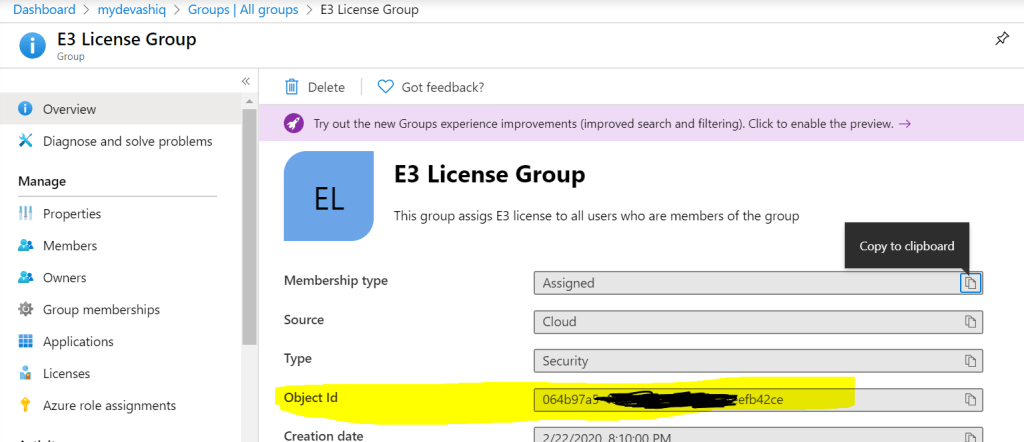

Start by registering the two Azure AD applications described above.

APP 1:

Register an Azure AD application with the following permission

APP 2 (Admin App):

Another app for admins for granting roles to APP 1

Grant permission role to the SharePoint site for the Azure AD Application:

This step grants the Azure AD application that has the Sites.Selected application permission access to a given site collection. Perform the following steps to grant the role (read, write, or read and write) to APP 1:

- Gather the Client ID, Tenant ID and Client secret of the admin app

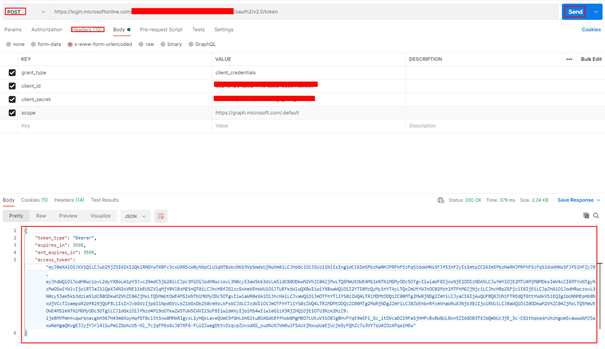

- In Postman, make an HTTP request to generate the access token for the admin app (APP 2)

Request Method: POST

Request URL: https://login.microsoftonline.com/yourtenantID/oauth2/v2.0/token

Request Header:

Key: Content-Type

Value: application/x-www-form-urlencoded

Request Body:

grant_type: client_credentials

scope: https://graph.microsoft.com/.default

client_id: adminappclientid

client_secret: adminappclientsecret

- Copy the access_token to be used for granting roles.
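For readers who prefer a script to Postman, the token request above can be sketched in Python using only the standard library. The function names `build_token_request` and `fetch_access_token` are hypothetical helpers introduced for illustration; the URL, header, and form fields are the ones listed in the step above.

```python
import json
import urllib.parse
import urllib.request

# Token endpoint from the step above; the tenant ID is filled in per call.
TOKEN_URL = "https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/token"


def build_token_request(tenant_id, client_id, client_secret):
    """Return (url, form-encoded body, headers) for the client credentials request."""
    url = TOKEN_URL.format(tenant_id=tenant_id)
    form = {
        "grant_type": "client_credentials",
        "scope": "https://graph.microsoft.com/.default",
        "client_id": client_id,
        "client_secret": client_secret,
    }
    body = urllib.parse.urlencode(form).encode("utf-8")
    headers = {"Content-Type": "application/x-www-form-urlencoded"}
    return url, body, headers


def fetch_access_token(tenant_id, client_id, client_secret):
    """Send the request and return the access_token field of the JSON response."""
    url, body, headers = build_token_request(tenant_id, client_id, client_secret)
    request = urllib.request.Request(url, data=body, headers=headers, method="POST")
    with urllib.request.urlopen(request) as response:
        return json.load(response)["access_token"]
```

Calling `fetch_access_token("yourtenantID", "adminappclientid", "adminappclientsecret")` with the real values of the admin app (APP 2) should return the same token that Postman shows in the `access_token` field.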

- Get the Client ID of the Azure AD Application – APP 1 with Sites.Selected permission

- Decide on the role (read or write) to grant on the site to APP 1, the app with the Sites.Selected permission.

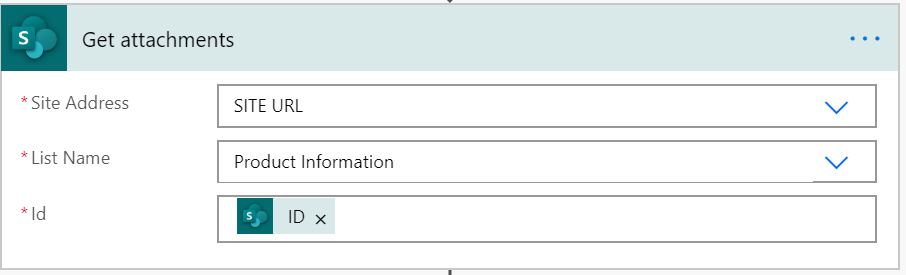

- Get the SiteId of the SharePoint site to be assigned permissions for the application (App 1). An easy way to get the siteId is by viewing the page source from the browser with the site open.

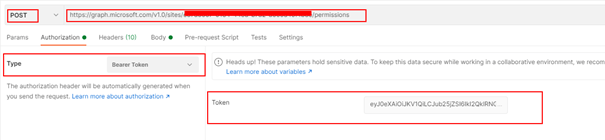
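As an alternative to reading the page source, Microsoft Graph can resolve a site from its URL with a GET request to `/sites/{hostname}:/{server-relative-path}`; the `id` field of the response is the site identifier (Graph returns it in the composite form `hostname,site-collection-guid,web-guid`). The sketch below only builds that lookup URL. The helper name `site_lookup_url` is hypothetical, and the request itself would still need a bearer token that is allowed to read the site.

```python
from urllib.parse import quote, urlsplit

GRAPH_SITES = "https://graph.microsoft.com/v1.0/sites"


def site_lookup_url(site_url):
    """Turn a SharePoint site URL into the Graph URL that returns its site ID."""
    parts = urlsplit(site_url)
    if not parts.hostname:
        raise ValueError("expected an absolute URL such as https://tenant.sharepoint.com/sites/x")
    path = parts.path.rstrip("/")
    if not path:
        # Root site of the tenant: the hostname alone addresses it.
        return f"{GRAPH_SITES}/{parts.hostname}"
    # Path-based addressing: hostname, a colon, then the server-relative path.
    return f"{GRAPH_SITES}/{parts.hostname}:{quote(path)}"


print(site_lookup_url("https://tenantname.sharepoint.com/sites/siteName"))
```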

- In Postman, make an HTTP request to grant the site role to APP 1. Replace siteId in the URL with the actual site ID, which is a GUID

Request Method: POST

Request URL: https://graph.microsoft.com/v1.0/sites/siteId/permissions

Request Header:

Key: Content-Type

Value: application/json

Request Body: raw

Replace the id with the client ID of APP 1 and the displayName with the display name of APP 1

    {
      "roles": ["write"],
      "grantedToIdentities": [{
        "application": {
          "id": "xxxxxx-APP1GUID-4ad9-xxxx-4d36e68b0454",
          "displayName": "AppNamewithSelectedPermissions-App1"
        }
      }]
    }

- Paste the access token into the Token box as shown below, with the authorization type set to Bearer Token

- Send the request to grant the role to APP 1. Once the request succeeds, APP 1 (with the Sites.Selected permission) has access to the site with the write role we granted. You can give the app access to multiple SharePoint sites in the same way.
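The grant request from the steps above can also be sent from a script. The following is a minimal sketch in Python, assuming the access token of the admin app (APP 2) has already been obtained; `build_grant_request` is a hypothetical helper name, while the URL, headers, and JSON body mirror the Postman request.

```python
import json
import urllib.request


def build_grant_request(site_id, app_id, app_display_name, roles, access_token):
    """Build the POST /sites/{siteId}/permissions request that grants roles to an app."""
    url = f"https://graph.microsoft.com/v1.0/sites/{site_id}/permissions"
    payload = {
        "roles": list(roles),  # for example ["read"] or ["write"]
        "grantedToIdentities": [
            {"application": {"id": app_id, "displayName": app_display_name}}
        ],
    }
    headers = {
        "Content-Type": "application/json",
        "Authorization": f"Bearer {access_token}",  # token of the admin app (APP 2)
    }
    data = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(url, data=data, headers=headers, method="POST")


def grant_site_role(site_id, app_id, app_display_name, roles, access_token):
    """Send the grant request and return the created permission as a dict."""
    request = build_grant_request(site_id, app_id, app_display_name, roles, access_token)
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

Splitting the request construction from the network call makes it easy to inspect the URL and body before anything is sent, and to loop over several site IDs when the same app needs access to multiple sites.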

Grant the Role using PnP PowerShell:

There is a PnP PowerShell cmdlet that grants a registered Azure AD application with the Sites.Selected permission access to a SharePoint site. A site collection administrator can run the command after creating a connection to the site:

Connect-PnPOnline https://tenantname.sharepoint.com/sites/siteName -Interactive

You will be prompted to enter credentials, including the second factor. After the connection is created, enter the following command to grant the Write permission to the AD app:

Grant-PnPAzureADAppSitePermission -AppId 'AzureAppIdwithSitesdotselectedpermission' -DisplayName 'App Name here' -Site 'https://tenantname.sharepoint.com/sites/sitename' -Permissions Write

To install PnP PowerShell module on the local workstation, enter the following command

Install-Module -Name PnP.PowerShell

There is also a PnP cmdlet to register an AD app in Azure Active Directory.

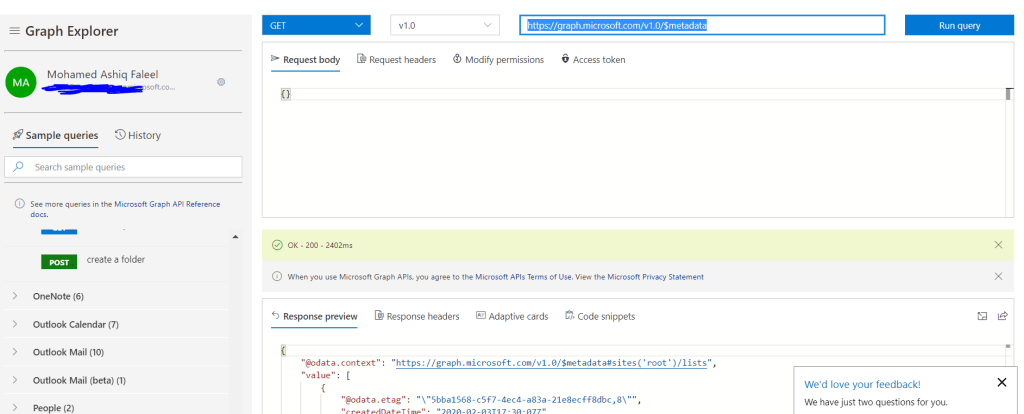

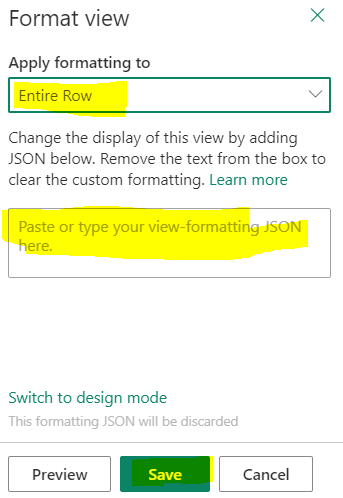

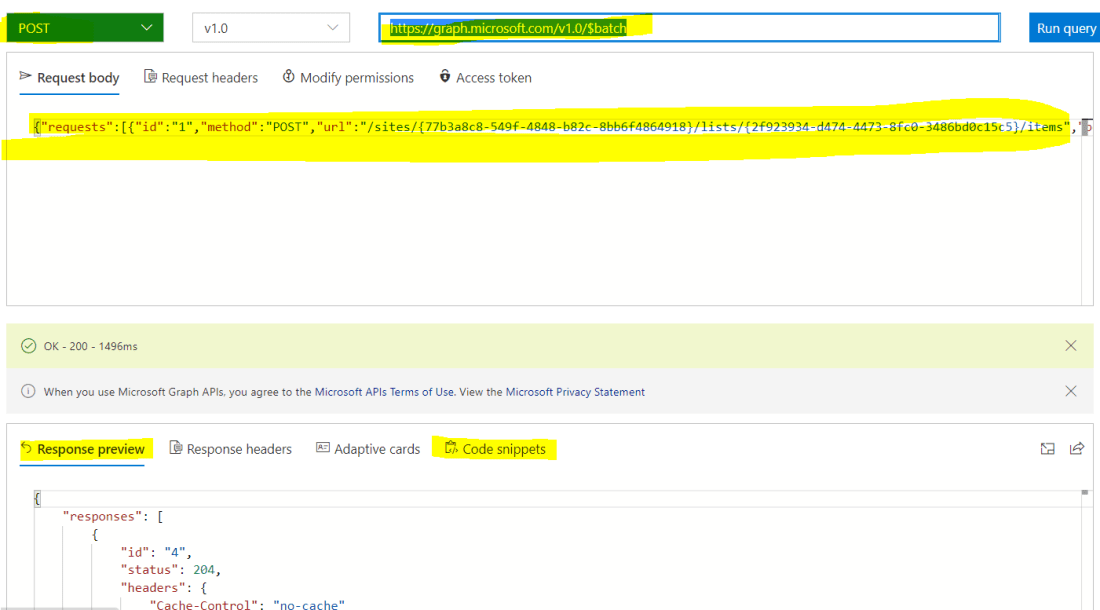

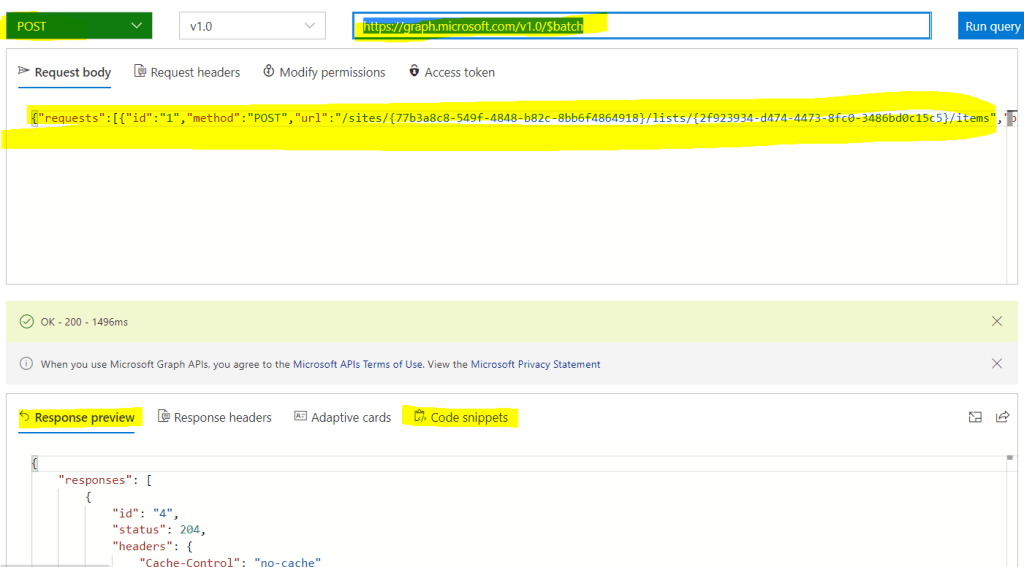

Grant the Role by an Admin using the Graph Explorer tool:

An admin can also assign the role without the admin AD app (APP 2) by using the Graph Explorer tool. Only an admin can do this.

If you get a permissions-related error, make sure the admin has consented to Sites.FullControl.All for Graph Explorer. There is also an SPFx web part, developed by a community member, that provides a user interface for this operation:

https://github.com/pnp/sp-dev-fx-webparts/tree/master/samples/react-sites-selected-admin
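Whichever method is used, the result can be checked afterwards with a GET request to the same `/sites/{siteId}/permissions` endpoint, which lists the permissions on the site. The sketch below only parses such a response; `apps_with_access` is a hypothetical helper, and the sample payload is illustrative data shaped like the request body used earlier, not output captured from a real tenant.

```python
def apps_with_access(permissions_response):
    """Return (permission id, app id, app display name) for each application grant."""
    grants = []
    for permission in permissions_response.get("value", []):
        for identity in permission.get("grantedToIdentities", []):
            application = identity.get("application")
            if application:
                grants.append(
                    (permission.get("id"), application.get("id"), application.get("displayName"))
                )
    return grants


# Illustrative payload only; the IDs and names are placeholders.
sample = {
    "value": [
        {
            "id": "1",
            "grantedToIdentities": [
                {
                    "application": {
                        "id": "xxxxxx-APP1GUID-4ad9-xxxx-4d36e68b0454",
                        "displayName": "AppNamewithSelectedPermissions-App1",
                    }
                }
            ],
        }
    ]
}

for permission_id, app_id, name in apps_with_access(sample):
    print(permission_id, app_id, name)
```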

Reference:

Assign permission role programmatically: https://docs.microsoft.com/en-us/graph/api/site-post-permissions?view=graph-rest-1.0&tabs=csharp

Summary:

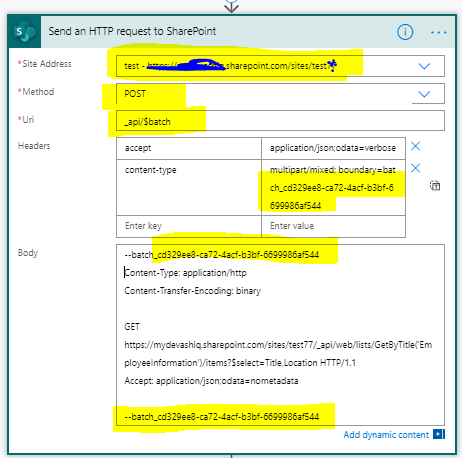

In this post we have seen how to grant site access to an Azure AD application that has the Sites.Selected permission. You can also grant a permission/role to an app with the Sites.Selected permission programmatically. If you are using the SharePoint API instead of the Graph API in the Azure AD app registration, Sites.Selected is available under Application permissions, as shown in the screenshot.

Hope you have found this informative, and thanks for reading. If you are visiting my blog for the first time, please do look at my other blog posts.
